Why Your AI Systems Fail in Production

Most AI failures aren't model problems. They're data engineering problems. This framework reveals the six layers of responsibility that determine whether your AI systems can be trusted at scale.

Download Free Framework

Join 100+ technology leaders

Data Engineering for AI Systems

17 pages · 30-day implementation guide included

17 Pages · 6 Framework Layers · 30-Day Playbook · 100% Actionable

Your AI works in the lab. Then it breaks in the real world.

Models that perform brilliantly in development degrade unexpectedly in production. Outcomes drift. Governance concerns surface too late. Teams retrain models and adopt new tools, only to watch the same problems reappear.

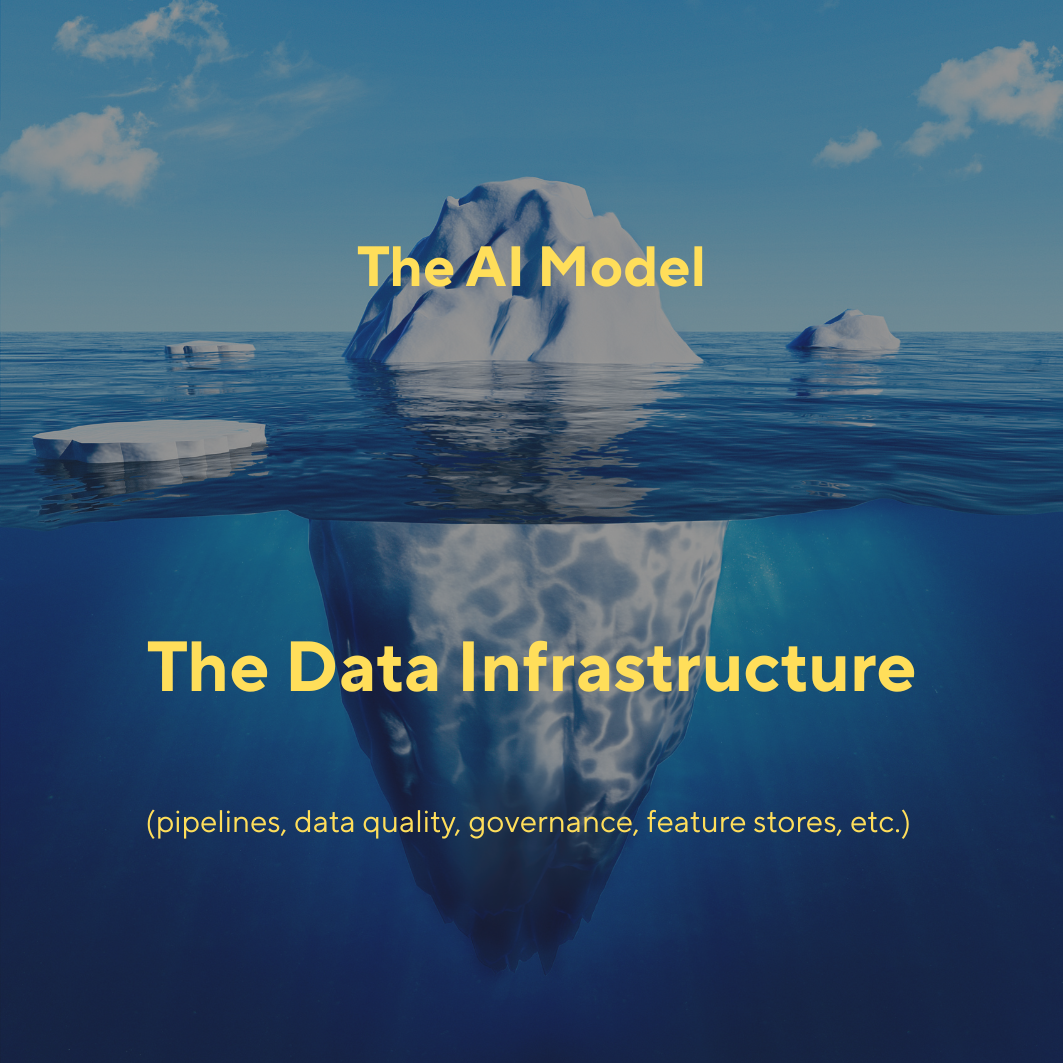

The frustrating truth? In most cases, the model isn't the problem.

AI systems fail because the data systems beneath them were never designed for AI-driven decisions. Traditional data engineering evolved for analytics and reporting, where latency is tolerable and errors are investigated after the fact.

AI operates under fundamentally different constraints. And when assumptions break, these systems fail silently.

A Responsibility-Based Framework for AI Reliability

The Data Engineering for AI Systems framework defines six layers that collectively determine whether your AI can be trusted in production.

1. Diagnose at the Source

Learn why failures originate in lower layers but surface as "model problems" and how to trace them back.

2. Assign Clear Ownership

Understand which teams own which responsibilities and eliminate the gaps where failures hide.

3. Engineer for Trust

Design data systems that make AI decisions traceable, accountable, and aligned with business goals.

4. Detect Before Damage

Build observability that catches silent degradation before it impacts customers or compliance.

Everything Inside the Whitepaper

Practical frameworks, diagnostic tools, and implementation guidance. Not theory.

Root Cause Analysis

Why AI failures almost always originate earlier in the data lifecycle than you think, and how to find them.

6-Layer Framework

A complete mental model for understanding how data, decisions, and outcomes connect in AI systems.

Anti-Pattern Guide

The six most common mistakes organizations make when operationalizing AI and how to avoid each one.

Diagnostic Checklist

Questions to assess your current AI infrastructure and identify your weakest responsibility layers.

30-Day Playbook

Step-by-step guidance for applying the framework in your first month, focused on quick wins.

Measurement Framework

How to measure value, risk, and reliability beyond model accuracy. The metrics that actually matter.

Chris Seferlis

Director of Technology Strategy, Microsoft

Chris helps enterprise organizations navigate the intersection of data strategy, AI implementation, and organizational transformation. With experience spanning Fortune 500 companies and high-growth startups, he specializes in making AI systems reliable at scale.

He also teaches at Boston University, where he brings real-world case studies into the classroom to prepare the next generation of technology leaders.

Ready to Engineer AI That Actually Works?

Get the framework that helps technology leaders diagnose failures at their true point of origin and build data systems that hold up under real-world conditions.